Microprocessors: Introduction, Architecture Basics, Complex Instruction Set Computer (CISC) and Reduced Instruction Set Computer (RISC) Processors, Logical Similarity, Short Chronology of Microprocessor Development, The Intel Family of Microprocessors, The Motorola Family of Microprocessors, and RISC Processor Development

Microprocessors

In the simplest sense, a microprocessor may be thought of as a central processing unit (CPU) on a chip. Microprocessor technology advances quickly, driven by progress in ultra large-scale integration/very large-scale integration (ULSI/VLSI) physics and technology, by fabrication advances such as reductions in feature size, and by improvements in architecture.

Developers of microprocessor-based systems are interested in cost, performance, power consumption, and ease of programmability. The last of these is, perhaps, the most important element in bringing a product to market quickly. A key factor in ease of programmability is the availability of program development tools, because these save a great deal of time in small system implementation and make the developer’s job much easier. It is not unusual to find small electronic systems driven by microcontrollers and microprocessors that are far more complex than the project at hand requires, simply because the ease of development on these platforms offsets considerations of cost and power consumption. Development tools are, therefore, a tangible asset in the efficient implementation of microprocessor-based systems.

Often, a more general purpose microprocessor has a companion microcontroller within the same family. Microcontrollers are a subset of the broader area of embedded systems. A microcontroller contains the basic architecture of the parent microprocessor with additional on-chip special purpose hardware that makes small system implementation easy. Examples of such hardware include analog-to-digital (A/D) and digital-to-analog (D/A) converters, timers, small amounts of on-chip memory, serial and parallel interfaces, and other input/output-specific hardware. These devices are the most cost-efficient choice for developing small electronic control systems, since much of the externally needed hardware is already designed onto the chip, and the component count external to the microcontroller is therefore kept to a minimum. In addition, the advanced development tools of the parent microprocessor are often available for programming it. A product may therefore be brought to market much faster than if the developer had used a more general purpose microprocessor, which often requires a substantial amount of external support hardware.

Architecture Basics

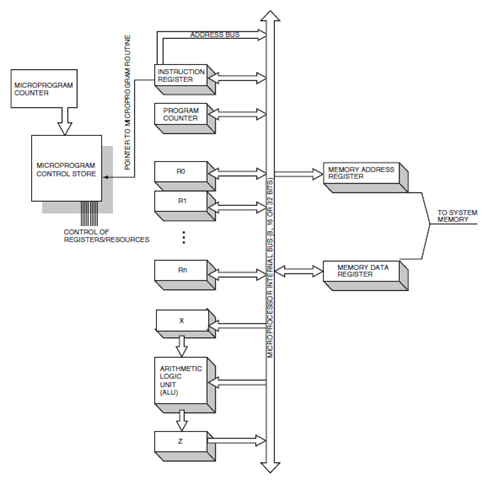

In the early days of mainframe computers, only a few instructions (e.g., addition, load accumulator, store to memory) were available to the programmer, and the CPU had to be patched, or its configuration changed, for different applications. The invention of microprogramming by Maurice Wilkes in 1951 freed the instruction set from this fixed wiring. Microprogramming made more complex tasks possible by manipulating the resources of the CPU (its registers, arithmetic logic unit, and internal buses) in a well-defined but programmable way. In this type of CPU, the microprogram, or control store, contains the realization of the semantics of the native machine instructions on a particular set of CPU internal resources. Within the microprogram reside the routines used to implement more complex instructions and addressing modes. Microprogramming is still in strong use today and remains a key element of the complex instruction set computer (CISC) microprocessor.
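To make the idea of a control store concrete, the Python sketch below models it as a table that maps each native opcode to an ordered list of micro-operations acting on a few internal registers: an accumulator `ACC`, a memory address register `MAR`, a memory data register `MDR`, and `OPERAND`, which stands for the address field of the instruction register. The opcodes, register names, and micro-operations are hypothetical and chosen only for illustration; a real control store holds binary control words, not Python functions.

```python
from typing import Callable, Dict, List

Regs = Dict[str, int]
MicroOp = Callable[[Regs, List[int]], None]

# Hypothetical micro-operations: each moves data between internal
# registers, memory, and the ALU, as a single machine cycle might.
def mar_from_ir_operand(r: Regs, mem: List[int]) -> None:
    r["MAR"] = r["OPERAND"]

def mdr_from_memory(r: Regs, mem: List[int]) -> None:
    r["MDR"] = mem[r["MAR"]]

def memory_from_mdr(r: Regs, mem: List[int]) -> None:
    mem[r["MAR"]] = r["MDR"]

def acc_from_mdr(r: Regs, mem: List[int]) -> None:
    r["ACC"] = r["MDR"]

def mdr_from_acc(r: Regs, mem: List[int]) -> None:
    r["MDR"] = r["ACC"]

def acc_plus_mdr(r: Regs, mem: List[int]) -> None:
    r["ACC"] = (r["ACC"] + r["MDR"]) & 0xFF  # 8-bit ALU result

# The control store: each native instruction's semantics is a
# microroutine, i.e., an ordered list of micro-operations.
CONTROL_STORE: Dict[str, List[MicroOp]] = {
    "LOAD":  [mar_from_ir_operand, mdr_from_memory, acc_from_mdr],
    "ADD":   [mar_from_ir_operand, mdr_from_memory, acc_plus_mdr],
    "STORE": [mar_from_ir_operand, mdr_from_acc, memory_from_mdr],
}

def execute(opcode: str, operand: int, r: Regs, mem: List[int]) -> int:
    """Run one native instruction's microroutine; return micro-ops used."""
    r["OPERAND"] = operand
    routine = CONTROL_STORE[opcode]
    for micro_op in routine:
        micro_op(r, mem)
    return len(routine)
```

Because an instruction’s behavior lives in the `CONTROL_STORE` table rather than in the micro-operation functions, changing a table entry changes what a native instruction does without touching the underlying "hardware" operations.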

The evolution from the original large mainframe computers, with hard-wired logical paths and schemes for instruction manipulation, toward more flexible and complex instruction sets and addressing modes was a natural one. It was driven by advances in semiconductor fabrication technology, memory design, and device physics. Advances in semiconductor fabrication technology made it possible to place the CPU on a monolithic integrated circuit along with additional hardware. With the advent of programmable read-only memory, the microprogram was no longer bound to the whims of the original designer: it could be reprogrammed at a later date if the manipulation of resources for a particular task needed to be modified or streamlined. In the simplest view, microprocessors became more and more complex, with larger instruction sets and many addressing modes. All of these advances were welcomed by compiler writers, and the growth in instruction set complexity was a natural consequence of advances in component reliability and density.

Complex Instruction Set Computer (CISC) and Reduced Instruction Set Computer (RISC) Processors

The strength of the microprocessor is its ability to perform simple logical tasks at high speed. Complex instruction set computer (CISC) microprocessors typically have small register sets, memory-to-memory operations, large instruction sets (with variable instruction lengths), and microcode. The basic philosophy of the CISC microprocessor, simply stated, is that added hardware can result in an overall increase in speed. The ultimate CISC processor would map each high-level language statement to a single native CPU instruction. Microcode makes this complexity manageable but requires multiple machine cycles to execute a single CISC instruction: after the instruction is decoded on a CISC machine, its implementation may take 10 or 12 machine cycles, depending on the instruction and addressing mode used. The original trend in microprocessor development was toward an increasingly complex instruction set. Although there may be hundreds of native machine instructions, only a handful are used frequently. Ironically, CISC instruction set complexity comes at a sacrifice in speed, because it is harder to increase the clock speed of a complex chip. Recognition of this, along with demands for higher clock speeds, has shifted favor toward the reduced instruction set computer (RISC) microprocessor.

This trend reversal followed studies in the early 1970s showing that although CISC machines had plenty of instructions, relatively few of them were actually being used by programmers. In fact, 85% of all programming was found to consist of simple assignment statements (i.e., A = B).
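Studies of this kind measure the dynamic instruction mix, that is, how often each instruction actually executes. The Python sketch below performs such a measurement over an instruction trace; the trace and its mnemonics are made up for illustration and are not data from the studies mentioned above.

```python
from collections import Counter
from typing import Iterable, List, Tuple

def instruction_mix(trace: Iterable[str]) -> List[Tuple[str, float]]:
    """Return (mnemonic, percent of executed instructions), most frequent first."""
    counts = Counter(trace)
    total = sum(counts.values())
    return [(op, 100.0 * n / total) for op, n in counts.most_common()]

# Hypothetical trace of executed mnemonics (illustrative only).
trace = ["MOV", "MOV", "ADD", "MOV", "CMP", "JNE", "MOV", "MOV", "ADD", "MOV"]
for op, pct in instruction_mix(trace):
    print(f"{op:4s} {pct:5.1f}%")
```

Run over traces of real programs, a tally like this is what reveals that a few simple instructions dominate execution while many complex ones are rarely used.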

RISC machines have relatively few instructions, each requiring few machine cycles to implement. What they do have is a large number of registers and a high degree of parallelism. The ideal RISC machine attempts to complete an instruction in a single machine cycle; if this were achieved, a 100-MHz microprocessor would execute 100 million instructions per second. The many registers allow operands to be kept on chip and moved quickly, which serves the goal of reducing machine cycles, and parallelism, chiefly in the form of pipelining, allows several instructions to be in progress at once. CISC machines, on the other hand, have relatively few registers and depend on the microprogram to manipulate data over multiple machine cycles. In a CISC machine, the microprogram handles much of the complexity of interpreting the native machine instruction. It is not uncommon for an advanced microprocessor of this type to have over 100 native machine instructions and a large number of addressing modes.
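The 100-MHz example follows from a simple relation: instruction throughput equals the clock rate divided by the average number of cycles per instruction (CPI). The Python sketch below applies it; the CPI values used are illustrative assumptions, not measurements of any particular processor.

```python
def mips(clock_hz: float, cpi: float) -> float:
    """Millions of instructions per second for a given clock and average CPI."""
    return clock_hz / cpi / 1e6

def execution_time_s(instruction_count: int, cpi: float, clock_hz: float) -> float:
    """Program run time: instructions x cycles/instruction / cycles per second."""
    return instruction_count * cpi / clock_hz

# Ideal RISC case from the text: one instruction per cycle at 100 MHz.
print(mips(100e6, 1.0))   # 100.0 (MIPS)
# A hypothetical CISC instruction averaging 10 cycles at the same clock.
print(mips(100e6, 10.0))  # 10.0 (MIPS)
```

A CISC program may need fewer instructions for the same task, so the fairer comparison is `execution_time_s`, which combines instruction count, CPI, and clock rate.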

The two basic philosophies, CISC and RISC, are idealized concepts, and both have their merits. In practice, microprocessors typically incorporate elements of both to enhance performance and speed. In summary, CISC machines place the complexity of programming in the microprogram, thereby simplifying the compiler, whereas RISC machines place that complexity in the compiler itself.
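The division of labor can be illustrated with the statement A = B + C. The Python sketch below emits code for two hypothetical targets: a CISC-style machine with a memory-to-memory ADD, and a RISC-style load/store machine whose compiler must choose registers and issue separate loads and stores. The mnemonics, register names, and operand syntax are invented for illustration and do not belong to any real instruction set.

```python
from typing import List

def gen_cisc(dest: str, src1: str, src2: str) -> List[str]:
    # One memory-to-memory instruction; the microprogram performs the
    # operand fetches, the addition, and the store internally.
    return [f"ADD {dest}, {src1}, {src2}"]

def gen_risc(dest: str, src1: str, src2: str) -> List[str]:
    # Only LOAD and STORE touch memory, so the compiler itself must
    # choose registers and spell out every step.
    return [
        f"LOAD  r1, {src1}",
        f"LOAD  r2, {src2}",
        "ADD   r3, r1, r2",
        f"STORE r3, {dest}",
    ]

for line in gen_cisc("A", "B", "C"):
    print(line)
print("---")
for line in gen_risc("A", "B", "C"):
    print(line)
```

The RISC sequence is longer, but each of its instructions is simple enough to complete in about one cycle, whereas the single CISC instruction may take many microcoded cycles.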

Logical Similarity

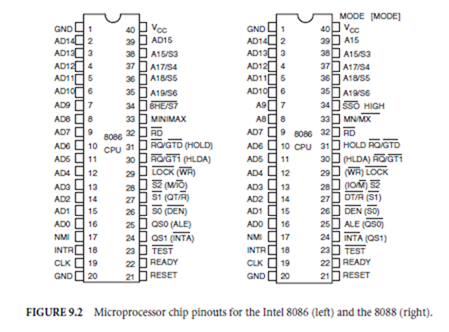

Microprocessors may often be highly specialized for a particular application. The external logical appearance of all microprocessors, however, is essentially the same. Although the packages differ, each contains connection pins for the various control signals coming from or going to the microprocessor. These pins are connected to the system power supply, external logic, and hardware to make up a complete computing system. External memory, math coprocessors, clock generators, and interrupt controllers are examples of such hardware, and all must interface to the generic microprocessor. A diagram of a typical microprocessor pinout and the common grouping of its control signals is shown in Fig. 9.1, and the pinouts of the Intel® 8086 and 8088 processors are shown in Fig. 9.2. Externally, the signals may be grouped as addressing, data, bus arbitration and control, coprocessor signals, interrupts, status, and miscellaneous connections. Some groups, such as the address and data buses, occupy multiple pins.

Just as the logical connections of the microprocessor are the same from family to family, so are the basic instructions. For instance, all microprocessors have an add instruction, a subtract instruction, and a memory read instruction. Although these instructions are logically the same, each microprocessor realizes the semantics of an instruction (e.g., ADD) in its own way. The implementation of ADD depends on the internal resources of the CPU, such as how many registers are available, how many internal buses there are, and whether data and address information travel on separate internal paths or share the same ones.

To understand how a microprogram realizes the semantics of a native machine language instruction in a CISC machine, refer to Fig. 9.3. The figure illustrates a very simple CISC machine with a single bus, which is used to transfer both address and data information inside the microprocessor. Only one instruction may be operated on at a time with this scheme, and it is far simpler than most microprocessors available today, but it illustrates the process of instruction implementation. The process begins with a procedure (a routine in the control store called the instruction fetch) that fetches the next contiguous instruction in memory and places its contents in the instruction register for interpretation. The program counter register contains the address of this instruction, and its contents are placed in the memory address register.

Specific steps (micro-operations), which direct the microprocessor to fetch the next instruction from memory, are included with every native instruction. The instruction fetch procedure is an integral part of every native instruction and represents the minimum number of machine cycles that a microprocessor must go through to implement even the simplest instruction. Even a halt instruction, therefore, requires several machine cycles to implement.
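The cost of the shared fetch routine can be tallied directly. In the Python sketch below, each micro-operation is assumed to take one machine cycle on a single-bus machine like the one in Fig. 9.3; the register names (including `Y`, an ALU input latch) and the micro-operation sequences are illustrative assumptions, not a description of any specific processor’s control store.

```python
from typing import Dict, List

# Hypothetical instruction-fetch microroutine shared by every instruction.
# With only one internal bus, each register transfer gets its own cycle.
FETCH: List[str] = [
    "MAR <- PC",
    "MDR <- M[MAR]; PC <- PC + 1",
    "IR <- MDR",
]

# Hypothetical execute-phase microroutines (one micro-op per cycle).
EXECUTE: Dict[str, List[str]] = {
    "HALT": ["stop clock"],
    "ADD":  ["MAR <- IR.addr", "MDR <- M[MAR]", "Y <- MDR", "ACC <- ACC + Y"],
}

def machine_cycles(opcode: str) -> int:
    """Total cycles = fetch micro-ops + execute micro-ops."""
    return len(FETCH) + len(EXECUTE[opcode])

for op in EXECUTE:
    print(op, machine_cycles(op))
```

Even the one-step HALT costs four cycles here, because the three fetch cycles are paid before the instruction is known.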

The advantages of the CISC scheme are clear. With a large number of instructions available, the writer of a compiler or high-level language interpreter has a relatively easy task and many tools at hand. These include addressing modes that facilitate relative addressing, indirect addressing, and autoincrementing or autodecrementing. In addition, there are typically instructions for memory-to-memory data transfers. Ease of programming, however, does not come without a price, and the disadvantages of the CISC scheme are equally clear. The implementation of a particular instruction is bound to the microprogram, may take many machine cycles, and may be relatively slow. The CISC architectural scheme also requires a lot of real estate on the semiconductor chip. Size and speed (which are closely linked) are therefore sacrificed for programming simplicity.
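The addressing modes named above differ only in how the effective address of an operand is formed. The Python sketch below computes effective addresses for several of them on a hypothetical machine with a 16-bit address space, with memory modeled as a list of words; the register names, mode names, and increment step are assumptions made for illustration.

```python
from typing import Dict, List

ADDR_MASK = 0xFFFF  # assume a 16-bit address space

def effective_address(mode: str, regs: Dict[str, int], mem: List[int],
                      reg: str = "", disp: int = 0, pc: int = 0,
                      step: int = 1) -> int:
    if mode == "pc_relative":
        # Operand lies at a displacement from the program counter.
        return (pc + disp) & ADDR_MASK
    if mode == "register_indirect":
        # The register holds the operand's address.
        return regs[reg] & ADDR_MASK
    if mode == "memory_indirect":
        # Memory at (register + disp) holds the operand's address.
        return mem[(regs[reg] + disp) & ADDR_MASK] & ADDR_MASK
    if mode == "autoincrement":
        # Use the register as a pointer, then advance it (post-increment).
        ea = regs[reg] & ADDR_MASK
        regs[reg] = (regs[reg] + step) & ADDR_MASK
        return ea
    if mode == "autodecrement":
        # Move the pointer back first, then use it (pre-decrement).
        regs[reg] = (regs[reg] - step) & ADDR_MASK
        return regs[reg]
    raise ValueError(f"unknown addressing mode: {mode}")
```

Autoincrement and autodecrement update the register as a side effect, which is what makes them convenient for stepping through arrays and stacks; on a CISC machine, the microprogram performs that update as part of the instruction.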

The von Neumann architecture was once the basis of almost all CISC machines. This scheme is characterized by a common bus for data and address flow linking a small number of registers. The number of registers in the von Neumann machine varies with the design, but there are typically about 10, including registers for general program data and addresses as well as special-purpose registers for instruction storage, memory addressing, and latching operands for the arithmetic logic unit (ALU). The basic architecture of the von Neumann machine is illustrated in Figure 8.68. Recent advances, however, have led CISC machines to depart from the basic single-bus system.

Short Chronology of Microprocessor Development

The first single-chip CPU was the Intel 4004, developed for calculators. It processed data in 4 bits, and its instructions were 8 bits long. Program and data memory were separate. The 4004 had 46 instructions, a 4-level stack, a 12-b program counter, and sixteen 4-b registers. In 1972, the 4004’s successor, the 8008, was introduced, and it was followed by the 8080 in 1974. The 8080 had a 16-b address bus, an 8-b data bus, seven 8-b registers, a 16-b stack pointer to memory, and a 16-b program counter. It also had 256 input/output (I/O) ports, so that I/O devices did not take up memory space and could be addressed more directly. The design was updated in 1976 (the 8085) to require only a single +5 V supply.
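The address-bus widths in this chronology translate directly into address-space sizes: n address lines can select 2^n distinct locations. The Python sketch below applies that relation, assuming byte-addressable memory; the 256 I/O ports of the 8080 correspond to an 8-b port number, which is the same calculation with n = 8.

```python
def address_space(address_bits: int) -> int:
    """Number of distinct locations selectable with the given address width."""
    return 1 << address_bits

def human(n: int) -> str:
    """Format a count using binary units (KB/MB/GB); small counts stay plain."""
    for unit, size in (("GB", 1 << 30), ("MB", 1 << 20), ("KB", 1 << 10)):
        if n >= size:
            return f"{n // size} {unit}"
    return f"{n}"

# Widths consistent with the sizes quoted in this chapter.
for label, bits in [("8080 memory (16-b)", 16),
                    ("8080 I/O ports (8-b)", 8),
                    ("8086/8088 (20-b)", 20),
                    ("80286 / 68000 (24-b)", 24),
                    ("68020 (32-b)", 32)]:
    print(f"{label:22s} {address_space(bits):>13,d}  ({human(address_space(bits))})")
```

Each added address line doubles the reachable space, which is why the step from 16 to 24 b (64 KB to 16 MB) mattered so much for larger programs.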

In July 1976, Zilog introduced the Z-80, which was intended to be an improved 8080. It also used an 8-b data bus and a 16-b address bus, could execute all of the opcodes of the 8080, and added 80 more instructions. The register set was doubled and consisted of two banks, which could be switched. Two index registers (IX and IY) allowed more complex memory instructions. Probably the most successful feature of the Z-80 was its memory interface. Until its introduction, dynamic random-access memory (DRAM) had to be refreshed with rather complex external circuitry, which made small computing systems more complex and expensive. The Z-80 was the first chip to incorporate this refresh capability on-chip, which increased its popularity among system developers. The Z-8 was an embedded processor similar to the Z-80 with on-chip RAM and read-only memory (ROM). It was available at clock speeds up to 20 MHz and was used in a variety of small microprocessor-based control systems.

The next processor of note in the chronology was the Motorola 6800, introduced in 1974. Although the 6800 came from Motorola, it was MOS Technology that gained popularity with its 650x series, principally the 6502, which was used in early desktop computers (Commodores, Apples, and Ataris). The 6502 had very few registers and was principally an 8-b processor with a 16-b address bus. The Apple II, one of the first computers introduced to the mainstream consumer market, incorporated the 6502, and subsequent improvements in the Apple line of micros were downward compatible with the 6502 processor. The extension to the 6800 came in 1978, when Motorola introduced the 6809, with two 8-b accumulators that could be combined into a single 16-b accumulator for arithmetic operations. It had 59 instructions. Members of the 6800 family live on in embedded microcontrollers such as the 68HC05 and 68HC11, which are still popular for small control systems. The 68HC11 was extended to 16 b and named the 68HC16. Radiation-hardened versions of the 68HC11 have been used in communications satellites.
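On the 6809, the two 8-b accumulators A and B can be treated together as the 16-b accumulator D, with A as the high-order byte. The Python sketch below models that pairing, including a 16-b addition performed on D; it is a simplified model that ignores the condition-code flags.

```python
class Accumulators6809:
    """Simplified model of the 6809's A and B accumulators and their D pairing."""

    def __init__(self) -> None:
        self.a = 0  # 8-b accumulator A (high byte of D)
        self.b = 0  # 8-b accumulator B (low byte of D)

    @property
    def d(self) -> int:
        # D is the concatenation A:B.
        return (self.a << 8) | self.b

    @d.setter
    def d(self, value: int) -> None:
        value &= 0xFFFF
        self.a, self.b = value >> 8, value & 0xFF

    def add_d(self, operand: int) -> None:
        # One 16-b addition instead of two 8-b additions with carry;
        # flags (carry, overflow, etc.) are not modeled here.
        self.d = self.d + (operand & 0xFFFF)

acc = Accumulators6809()
acc.a, acc.b = 0x12, 0xF0
acc.add_d(0x0020)   # 0x12F0 + 0x0020 = 0x1310
print(hex(acc.d), hex(acc.a), hex(acc.b))
```

Note how the carry out of B propagates into A automatically, which is the convenience the combined accumulator provides.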

Advanced Micro Devices (AMD) introduced a 4-b bit-sliced microprocessor, the Am2901. Bit-sliced processors were modular in that they could be assembled to form larger word sizes. The Am2901 had a 4-b ALU, sixteen 4-b registers, and the hardware to connect carry/borrow signals between adjacent modules. In 1979, AMD developed the first floating point coprocessor for microprocessors. The AMD 9511 arithmetic circuit was used in some CP/M Z-80-based systems and in some systems based on the S-100 bus.
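Bit slicing works because each slice passes its carry out to the carry input of the next more significant slice. The Python sketch below chains four 4-b adder slices into a 16-b adder with a ripple carry; it models only the addition path, not the Am2901’s actual register file, function selection, or instruction encoding.

```python
from typing import Tuple

def slice_add(a: int, b: int, carry_in: int) -> Tuple[int, int]:
    """One 4-b slice: returns (4-b sum, carry out)."""
    total = (a & 0xF) + (b & 0xF) + carry_in
    return total & 0xF, total >> 4

def bit_sliced_add(a: int, b: int, slices: int = 4) -> Tuple[int, int]:
    """Add two (4*slices)-bit words by chaining 4-b slices, LSB slice first."""
    result, carry = 0, 0
    for i in range(slices):
        shift = 4 * i
        nibble, carry = slice_add(a >> shift, b >> shift, carry)
        result |= nibble << shift
    return result, carry

print([hex(v) for v in bit_sliced_add(0x1234, 0x0FCD)])
```

Adding another slice widens the word by 4 b with no change to the slices themselves, which is the modularity described above; practical designs often used lookahead carry logic instead of a plain ripple chain to speed up the carries.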

Around 1976, competition was heating up for the 16-b microprocessor market. The Texas Instruments (TI) TMS9900 was one of the first truly 16-b microprocessors and was designed as a single-chip version of the TI 990 minicomputer. The TMS9900 had two 16-b registers, good interrupt handling capability, and a decent instruction set for compiler developers. An embedded version (the TMS9940) was also produced by TI. Meanwhile, the stage was being set for IBM’s choice of a microprocessor for its IBM PC line of personal computers. Several 16-b microprocessors available at the time had much more powerful features and more straightforward open memory architectures (such as Motorola’s 68000). It is rumoured that IBM’s own engineers wanted to use the 68000, but at the time IBM had already negotiated the rights to the Intel 8086. Apparently, the choice of the 8088, with its 8-b external data bus, was a cost decision, because the 8088 could use the lower cost support chips associated with the 8085, whereas 68000 components were more expensive and not readily available.

In 1979, shortly after Intel introduced the 8086, Zilog introduced the Z-8000. It was a 16-b microprocessor capable of generating 23-b addresses. The Z-8000 had sixteen 16-b registers. The first 8 could be used as sixteen 8-b registers, or all 16 could be used as eight 32-b registers, which offered great flexibility in programming and for arithmetic calculations. Its instruction set included 32-b multiply and divide instructions. Like the Z-80, it also had memory refresh circuitry built into the chip. Probably most important in the CPU chronology, however, is that the Z-8000 was the first microprocessor to incorporate two different modes of operation. One mode was strictly reserved for use by the operating system; the other was a general purpose user mode. This scheme improved stability, in that the user could not crash the system as easily, and opened up the possibility of porting multitasking, multiuser operating systems such as UNIX to the chip.
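The protection that two modes of operation provide comes from refusing privileged operations in user mode. The Python sketch below shows that check; the instruction names, the set of privileged operations, and the trap mechanism are hypothetical and simplified, not the Z-8000’s actual encoding or exception behavior.

```python
PRIVILEGED = {"HALT", "SET_INTERRUPT_MASK", "IO_OUT", "LOAD_STATUS"}  # hypothetical

class PrivilegeViolation(Exception):
    """Stands in for the trap a real CPU raises to the operating system."""

class CPU:
    def __init__(self) -> None:
        self.supervisor = True  # assume the CPU resets into the system mode

    def execute(self, opcode: str) -> str:
        if opcode in PRIVILEGED and not self.supervisor:
            # A user program cannot halt the machine or touch I/O directly;
            # control passes to the operating system instead.
            raise PrivilegeViolation(opcode)
        return f"executed {opcode}"

    def enter_user_mode(self) -> None:
        # Dropping to user mode is allowed; returning to the system mode
        # normally happens only through a trap or interrupt.
        self.supervisor = False

cpu = CPU()
print(cpu.execute("IO_OUT"))    # allowed in the system mode
cpu.enter_user_mode()
print(cpu.execute("ADD"))       # ordinary instructions still run
try:
    cpu.execute("HALT")
except PrivilegeViolation as exc:
    print("trap to operating system:", exc)
```

Because a user program has no instruction that switches itself back to the privileged mode, the operating system keeps control of the machine, which is what makes multiuser operation practical.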

The Intel Family of Microprocessors

Intel was the first to develop a CPU on a chip, in 1971, with the 4004 microprocessor. This chip, along with the 8008, was commissioned for calculators and terminal control. Intel did not expect the demand for these units to be high, and several years later it developed a more general purpose microprocessor, the 8080, and a similar chip with more onboard hardware, the 8085. These were the industry’s first truly general CPUs available to be integrated into microcomputing systems. Intel’s first 16-b chip, the 8086, was introduced in 1978 and marked Intel’s entry into the realm of 16-b processors. A companion math coprocessor, the 8087, was developed for calculations requiring higher precision than the 8086’s 16-b registers could offer. Shortly after Intel developed the 8086 and the 8088 (a version with an 8-b external data bus), IBM chose the 8088 for its IBM PC microcomputers. This decision was a tremendous boon to Intel and its microprocessor efforts. In some ways, it has also made the 80x86 family a victim of its early success, as all subsequent improvements and moves to larger data and address bus CPUs have had to contend with downward compatibility.

The 80186 and 80188 microprocessors were, in general, improvements to the 8086 and 8088 that incorporated more on-chip hardware for input and output support. They were never widely used in personal computers, however, most likely because of the masking effect of the 8088’s success in the IBM PC. The 80186 is architecturally very similar to the 8086 but also contains a clock generator, a programmable interrupt controller, three 16-b programmable timers, two programmable DMA channels, a chip select unit, programmable control registers, a bus interface unit, and a 6-byte prefetch queue. The 80188 is the same, except that it has only an 8-b external data bus and a 4-byte prefetch queue.
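A prefetch queue lets the bus interface fetch instruction bytes ahead of the execution unit while the bus would otherwise be idle. The Python sketch below models only the queue’s bookkeeping, using the 6-byte and 4-byte depths quoted above; bus timing is ignored and the byte values are arbitrary. A jump flushes the queue because the prefetched bytes no longer lie on the execution path.

```python
from collections import deque
from typing import Deque, List

class PrefetchQueue:
    """FIFO of instruction bytes fetched ahead of execution (bus timing ignored)."""

    def __init__(self, memory: List[int], depth: int) -> None:
        self.memory = memory
        self.depth = depth          # e.g., 6 for the 80186, 4 for the 80188
        self.fetch_addr = 0         # next address the bus interface will read
        self.queue: Deque[int] = deque()

    def fill(self) -> int:
        """Fetch bytes while there is room; return how many were fetched."""
        fetched = 0
        while len(self.queue) < self.depth and self.fetch_addr < len(self.memory):
            self.queue.append(self.memory[self.fetch_addr])
            self.fetch_addr += 1
            fetched += 1
        return fetched

    def next_byte(self) -> int:
        """Execution unit consumes one byte, refilling first if empty."""
        if not self.queue:
            self.fill()
        return self.queue.popleft()

    def flush(self, target: int) -> None:
        """A jump discards prefetched bytes and restarts fetching at target."""
        self.queue.clear()
        self.fetch_addr = target

code = list(range(0x10, 0x20))      # 16 arbitrary instruction bytes
q186 = PrefetchQueue(code, depth=6)
q188 = PrefetchQueue(code, depth=4)
print(q186.fill(), q188.fill())     # bytes fetched ahead: 6 and 4
```

A deeper queue hides more fetch latency in straight-line code, but every taken jump throws its contents away.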

None of the Intel processors up to the beginning of the 1980s could address more than 1 megabyte of memory. The 80286, a 68-pin microprocessor, was developed to meet the needs of systems and programs that were evolving, in large part, because of the success of the 8088. The 80286 increased the available address space of the Intel microprocessor family to 16 megabytes. Also, beginning with the 80286, the data and address lines external to the chip were no longer shared; in earlier chips, the address pins were multiplexed with the data lines. The internal architecture was somewhat cumbersome, but it was retained to preserve downward compatibility with the earlier CPUs. Despite its unwieldiness, the 80286 was a huge success.
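On a multiplexed bus, the same pins carry an address early in the bus cycle and data later, so external logic must capture the address before the pins change roles. The Python sketch below models that with an address latch strobed by ALE (address latch enable); the signal name follows the 8086/8088 convention, but the cycle model is simplified and the values are arbitrary.

```python
from typing import Dict, Optional

class MultiplexedBus:
    """Shared address/data pins plus an external address latch (simplified)."""

    def __init__(self) -> None:
        self.pins: Optional[int] = None      # value currently on the shared pins
        self.latched_address: Optional[int] = None

    def address_phase(self, address: int) -> None:
        # CPU drives the address and pulses ALE; the latch captures it.
        self.pins = address
        self.latched_address = self.pins     # ALE strobe: latch the pins

    def data_phase(self, data: int) -> None:
        # The same pins now carry data; the latch still holds the address.
        self.pins = data

def write_cycle(bus: MultiplexedBus, memory: Dict[int, int],
                address: int, data: int) -> None:
    bus.address_phase(address)
    bus.data_phase(data)
    # Memory sees the address from the latch and the data from the pins.
    memory[bus.latched_address] = bus.pins

mem: Dict[int, int] = {}
bus = MultiplexedBus()
write_cycle(bus, mem, address=0x1234, data=0xAB)
print(hex(bus.pins), mem)
```

Separate address and data pins, as on the 80286, remove the need for the latch and keep the address valid for the whole cycle, at the cost of more package pins.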

In a decade, the microprocessor had advanced from its earliest beginnings (a 4-b CPU) to a true 16-b device, and many everyday computing tasks were offloaded from mainframes to desktop machines. In 1985, Intel developed a true 32-b processor on a chip, the 80386. It was downward compatible with object code back to the 8086 and continued to lock Intel into the rather awkward memory model developed in the 80286. At Motorola, the 68000 in some ways had a far simpler and more straightforward open address space and was a serious contender for heavy computing applications being ported to desktop machines from their larger mainframe cousins. It is for this reason that even today the 68000 is found in systems requiring compatibility with UNIX operating systems. The 80386 was, nevertheless, highly successful. The 80386SX was a lower cost version of the 80386 meant as an upgrade path for existing 80286 systems. It kept the 32-b internal architecture of the 80386 but used the 16-b external data bus of the 80286. A companion math coprocessor (a floating point unit, FPU), the 80387, was developed for use with the 80386.

The 80386’s success prompted other semiconductor companies (notably AMD and Cyrix) to offer clones of the processor, thus providing alternative sources for end users and system developers. With Intel’s introduction of the 80486 in 1989, which added full pipelining, on-chip caching, and an integrated rather than separate floating point unit, competition in the market was fierce. Because Intel could not protect a numeric name such as 80586, it gave its fifth-generation processor, introduced in 1993, the trademarked name Pentium. Because of its popularity, the 80x86 line is the most widely cloned.

The Motorola Family of Microprocessors

Alongside Intel’s development of the 8080, Motorola developed the 6800, an 8-b microprocessor, which was used in many embedded industrial control systems in the 1970s. It was not until 1979, however, that Motorola introduced its 16-b entry into the industry, the 68000. The 68000 was designed to be far more advanced than Intel’s 8086 in several ways. Its data and address registers were all 32 b wide, and it could address all 16 megabytes of external memory without the segmentation schemes used in the Intel series. This nonsegmented approach meant that the 68000 had no segment registers, and each of its instructions could address the complete complement of external memory.
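The difference can be seen in how a physical address is formed. On the 8086 (and on later Intel processors running in real mode), a 16-b segment value is shifted left by 4 b and added to a 16-b offset, giving a 20-b address, so a single offset reaches only a 64-KB window at a time. The 68000 instead uses the value of a 32-b address register directly, of which 24 b reach external memory. The Python sketch below contrasts the two; it ignores the 80286’s protected-mode descriptors.

```python
def intel_real_mode_address(segment: int, offset: int) -> int:
    """8086-style physical address: segment * 16 + offset, kept to 20 b."""
    return (((segment & 0xFFFF) << 4) + (offset & 0xFFFF)) & 0xFFFFF

def m68000_address(address_register: int) -> int:
    """68000-style flat address: the register value, 24 b of which reach the bus."""
    return address_register & 0xFFFFFF

# Two different segment:offset pairs can name the same byte.
print(hex(intel_real_mode_address(0x1234, 0x0010)))  # 0x12350
print(hex(intel_real_mode_address(0x1235, 0x0000)))  # 0x12350
# The 68000 reaches any byte of its 16-MB space with one register value.
print(hex(m68000_address(0x00ABCDEF)))               # 0xabcdef
```

With the flat model, a pointer is simply a number; with segmentation, software must manage segment values whenever data structures cross a 64-KB boundary.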

The 68000 was the first 16-b microprocessor to incorporate 32-b internal registers, an asset that led designers setting out to port sophisticated operating systems to desktop computers to select it. In some ways, the 68000 was ahead of its time; had IBM chosen the 68000 series as the core chip for its personal computers, the present state of the art of the desktop machine might be radically different. The 68000 was chosen by Apple for its Macintosh computers, and other computer manufacturers, including Amiga and Atari, chose it for its flexibility and its large internal registers.

In 1982, Motorola marketed another chip, the 68008, which was a stripped down version of the 68000 for low-end, low-cost products. The 68008 only had the capability to address 4 megabytes of memory, and its data bus was only 8-b wide. It was never very popular and certainly did not compete well with the 8088 by Intel (which was chosen for the IBM PC computers).

The 68000 was well suited to advanced operating systems except that it had no capability for supporting virtual memory. For this reason, Motorola developed the 68010, which could continue an instruction after it had been suspended by a bus error. The 68012 was identical to the 68010 except that it could address 2 gigabytes of memory with its 30 address bus pins.

One of the most successful microprocessors introduced by Motorola was the 68020. It was introduced in 1984 and was the industry’s first true 32-b microprocessor. Along with the 32-b registers standard to the 68000 series, it can address 4 gigabytes of memory and has a true 32-b wide data bus. It is still widely used.

The 68020 contains an internal 256-byte cache memory. This is an instruction cache, holding 64 long words of instructions. Direct access to this cache is not allowed; it serves only as an advance prefetch queue that enables the 68020 to execute tight loops of instructions without any further external instruction fetches. Since an instruction fetch takes time to process, the presence of the 256-byte instruction cache in the 68020 is a significant speed enhancement.
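A loop that fits in the cache runs from the cache after its first pass. The Python sketch below counts hits and misses for a small instruction cache with 64 long-word (4-byte) entries, matching the 256-byte size quoted above; the direct-mapped organization and the loop addresses are assumptions made for this illustration.

```python
from typing import Iterable, List, Optional, Tuple

LINE_BYTES = 4    # one long word per entry
NUM_LINES = 64    # 64 x 4 = 256 bytes

def simulate_icache(fetch_addresses: Iterable[int]) -> Tuple[int, int]:
    """Return (hits, misses) for a direct-mapped cache of long-word entries."""
    tags: List[Optional[int]] = [None] * NUM_LINES
    hits = misses = 0
    for addr in fetch_addresses:
        block = addr // LINE_BYTES
        index = block % NUM_LINES          # which entry the long word maps to
        tag = block // NUM_LINES           # identifies which block is stored there
        if tags[index] == tag:
            hits += 1
        else:
            misses += 1                    # external fetch needed; fill the entry
            tags[index] = tag
    return hits, misses

# Hypothetical tight loop: 8 long words (32 bytes) executed 100 times.
loop_body = [0x1000 + 4 * i for i in range(8)]
print(simulate_icache(loop_body * 100))   # only the first pass misses
```

A loop longer than 256 bytes behaves very differently: its later long words evict the earlier ones, so in this model every pass misses.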

The cache treatment was expanded in the 68030 to include a 256-byte data cache. In addition, the 68030 includes an onboard paged memory management unit (PMMU) to control access to virtual memory; this is the primary difference between the 68030 and the 68020. The PMMU is available as an extra chip (the 68851) for the 68020 but is included on the same chip in the 68030. The 68030 also includes an improved bus interface scheme. Externally, the connections of the 68020 and the 68030 are very nearly the same. The 68030 was offered in several speed grades, for example, the MC68030RC16 at 16 MHz and the MC68030RC20 at 20 MHz.
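A paged MMU translates each virtual address by splitting it into a page number and an offset and looking the page number up in a table maintained by the operating system. The Python sketch below shows single-level translation, assuming a 4-KB page size and using a dictionary as the page table; real PMMUs such as the 68030’s walk multilevel translation tables and support several page sizes, so this illustrates only the principle.

```python
from typing import Dict

PAGE_SIZE = 4096                            # assumed page size for this sketch
OFFSET_BITS = PAGE_SIZE.bit_length() - 1    # 12

class PageFault(Exception):
    """Raised when a virtual page has no physical frame (the OS must handle it)."""

def translate(vaddr: int, page_table: Dict[int, int]) -> int:
    page = vaddr >> OFFSET_BITS
    offset = vaddr & (PAGE_SIZE - 1)
    if page not in page_table:
        # On real hardware this is a bus error/fault; the OS loads the page
        # and the faulting instruction is restarted or continued.
        raise PageFault(hex(vaddr))
    return (page_table[page] << OFFSET_BITS) | offset

table = {0x00000: 0x00123, 0x00001: 0x00040}   # virtual page -> physical frame
print(hex(translate(0x00000ABC, table)))        # 0x123abc
print(hex(translate(0x00001004, table)))        # 0x40004
```

The fault path is where instruction continuation, as introduced with the 68010, becomes essential: the interrupted instruction must be able to resume once the missing page has been supplied.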

The 68000 featured supervisor and user modes. It was designed for expansion and could fetch the next instruction during an instruction’s execution, which represents 2-stage pipelining. The 68040 had a 6-stage pipeline. The advances in the 680x0 series continued with the 68060 in late 1994, a superscalar microprocessor similar to the Intel Pentium. It truly represents a merging of the CISC and RISC architectural philosophies. The 68060’s 10-stage pipeline translates 680x0 instructions into a decoded, RISC-like form and uses a resource renaming scheme to reorder the execution of the instructions. The 68060 includes power-saving features and operates from a 3.3-V power supply (again similar to the Intel Pentium processors).
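The benefit of deeper pipelines can be estimated with the ideal relation for a k-stage pipeline: n instructions finish in k + (n - 1) cycles rather than k × n, because once the pipeline is full, one instruction completes every cycle. The Python sketch below evaluates this for the 2-, 6-, and 10-stage depths mentioned above; it assumes one cycle per stage and no stalls, hazards, or branch penalties, which real processors do not achieve.

```python
def pipelined_cycles(n_instructions: int, stages: int) -> int:
    """Ideal cycles: fill the pipeline once, then one completion per cycle."""
    if n_instructions == 0:
        return 0
    return stages + (n_instructions - 1)

def unpipelined_cycles(n_instructions: int, stages: int) -> int:
    """Each instruction passes through all stages before the next starts."""
    return stages * n_instructions

n = 1000
for k in (2, 6, 10):
    speedup = unpipelined_cycles(n, k) / pipelined_cycles(n, k)
    print(f"{k:2d} stages: {pipelined_cycles(n, k)} cycles, speedup {speedup:.2f}")
```

For long instruction streams, the ideal speedup approaches the number of stages; in practice, branches and data dependencies reduce it, which is why techniques such as the 68060’s resource renaming and reordering matter.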

RISC Processor Development

The major development efforts for RISC processors were led by the University of California at Berkeley and Stanford University. Sun Microsystems developed the Berkeley version of the RISC processor [scalable processor architecture (SPARC)] for its high-speed workstations. This, however, was not the first RISC processor. It was preceded by the MIPS R2000 (based on the Stanford University design), the Hewlett-Packard PA-RISC CPU, and the AMD 29000.

The AMD 29000 is a RISC design that follows the lead of the Berkeley scheme. It has a large set of registers split into local and global sets, including 64 global registers. Reduced instruction set processors were developed following the recognition that many of the complex CISC instructions were not being used.

Defining Terms

Cache: Small amount of fast memory, physically close to CPU, used as storage of a block of data needed immediately by the processor. Caches exist in a memory hierarchy. There is a small but very fast L1 (level one) cache; if that misses, then the access is passed on to the bigger but slower L2 (level two) cache, and if that misses, the access goes to the main memory (or L3 cache, if it exists).

Pipelining: A microarchitecture technique that divides the execution of an instruction into sequential steps. Pipelined CPUs have multiple instructions executing at the same time, but at different stages in the machine. The term also refers to sending out an address before the data is actually needed.

Superscalar: Capable of executing multiple instructions in a given clock cycle. For example, the Pentium processor has two execution pipes (U and V) so it is superscalar level 2. The Pentium Pro processor can dispatch and retire three instructions per clock so it is superscalar level 3.


Further Information

In the fast-changing world of the microprocessor, obsolescence is a fact of life. In fact, market data show that with each new generation, the time spent ramping up the new technology and ramping down the old one gets shorter. There is also a greater need for accuracy in design and development to avoid errors such as the one Intel experienced with floating point computations in its Pentium processor in 1994. For the small system developer or user of microprocessors, there is an important need to keep abreast of newer chips with better capabilities. Luckily, there is an excellent way to keep up to date.

The CPU Information Center at the University of California, Berkeley maintains an excellent, up-to-date compilation of microprocessors and microcontrollers, their architectures, and their specifications on the World Wide Web (WWW). The site includes chip size and pinout information, tabular comparisons of microprocessor performance and architecture (such as those shown in the tables in this chapter), and references. It is updated regularly and serves as an excellent source for the small systems developer. Some of the information contained in this chapter was obtained and used with permission from that Web site. See http://www.infopad.berkeley.edu for an excellent, up-to-date summary.